Just want to clarify, this is not my Substack, I’m just sharing this because I found it insightful.

The author describes himself as a “fractional CTO” (no clue what that means, don’t ask me) and advisor. His clients asked him how they could leverage AI. He decided to experience it for himself. From the author (emphasis mine):

I forced myself to use Claude Code exclusively to build a product. Three months. Not a single line of code written by me. I wanted to experience what my clients were considering—100% AI adoption. I needed to know firsthand why that 95% failure rate exists.

I got the product launched. It worked. I was proud of what I’d created. Then came the moment that validated every concern in that MIT study: I needed to make a small change and realized I wasn’t confident I could do it. My own product, built under my direction, and I’d lost confidence in my ability to modify it.

Now when clients ask me about AI adoption, I can tell them exactly what 100% looks like: it looks like failure. Not immediate failure—that’s the trap. Initial metrics look great. You ship faster. You feel productive. Then three months later, you realize nobody actually understands what you’ve built.

The developers can’t debug code they didn’t write.

This is a bit of a stretch.

agreed. 50% of my job is debugging code I didn’t write.

Vibe coders can’t debug code because they didn’t write it

Vibe coders can’t debug code because they can’t write code

I mean I was trying to solve a problem t’other day (hobbyist) - it told me to create a

function foo(bar): await object.foo(bar)

then in object

function foo(bar): _foo(bar)

function _foo(bar): original_object.foo(bar)

like literally passing a variable between three wrapper functions in two objects that did nothing except pass the variable back to the original function in an infinite loop

add some layers and complexity and it’d be very easy to get lost
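For anyone trying to picture that wrapper chain, here is a minimal Python sketch. The class names `Original` and `Wrapper` and the helper `runs_forever` are made up for illustration, and the `await` from the suggestion is dropped to keep things synchronous; the actual code from that session isn't shown in the thread.

```python
class Original:
    """Stands in for `original_object` (hypothetical name)."""

    def __init__(self):
        self.wrapper = None

    def foo(self, bar):
        # The suggested change: hand the value to the wrapper object.
        return self.wrapper.foo(bar)


class Wrapper:
    """Stands in for `object` in the comment above."""

    def __init__(self, original):
        self.original = original

    def foo(self, bar):
        # First wrapper: only forwards the value.
        return self._foo(bar)

    def _foo(self, bar):
        # Second wrapper: forwards the value back to where it started.
        return self.original.foo(bar)


def runs_forever(value):
    """Return True if calling foo() never reaches any real work."""
    original = Original()
    original.wrapper = Wrapper(original)
    try:
        original.foo(value)
    except RecursionError:
        return True
    return False
```

In Python the loop ends with a `RecursionError` once the interpreter's recursion limit is hit; nothing in the chain ever does anything with `bar`.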

The few times I’ve used LLMs for coding help, usually because I’m curious if they’ve gotten better, they let me down. Last time it was insistent that its solution would work as expected. When I gave it an example that wouldn’t work, it even broke down each step of the function giving me the value of its variables at each step to demonstrate that it worked… but at the step where it had fucked up, it swapped the value in the variable to one that would make the final answer correct. It made me wonder how much water and energy it cost me to be gaslit into a bad solution.

How do people vibe code with this shit?

As a learning process it’s absolutely fine.

You make a mess, you suffer, you debug, you learn.

But you don’t call yourself a developer (at least I hope) on your CV.

I cannot understand and debug code written by AI. But I also cannot understand and debug code written by me.

Let’s just call it even.

At least you can blame yourself for your own shitty code, which hopefully will never attempt to “accidentally” erase the entire project

We’re about to face a crisis nobody’s talking about. In 10 years, who’s going to mentor the next generation? The developers who’ve been using AI since day one won’t have the architectural understanding to teach. The product managers who’ve always relied on AI for decisions won’t have the judgment to pass on. The leaders who’ve abdicated to algorithms won’t have the wisdom to share.

Except we are talking about that, and the tech bro response is “in 10 years we’ll have AGI and it will do all these things all the time permanently.” In their roadmap, there won’t be a next generation of software developers, product managers, or mid-level leaders, because AGI will do all those things faster and better than humans. There will just be CEOs, the capital they control, and AI.

What’s most absurd is that, if that were all true, that would lead to a crisis much larger than just a generational knowledge problem in a specific industry. It would cut regular workers entirely out of the economy, and regular workers form the foundation of the economy, so the entire economy would collapse.

“Yes, the planet got destroyed. But for a beautiful moment in time we created a lot of value for shareholders.”

Yep, and now you know why all the tech companies suddenly became VERY politically active. This future isn’t compatible with democracy. Once these companies no longer provide employment their benefit to society becomes a big fat question mark.

They never actually say what “product” they make, it’s always “shipped product” like they’re a fucking Amazon warehouse. I suspect that’s because it’s some trivial webpage that would take a student an afternoon to put together, and that they spent three days arguing with an autocomplete to shit out.

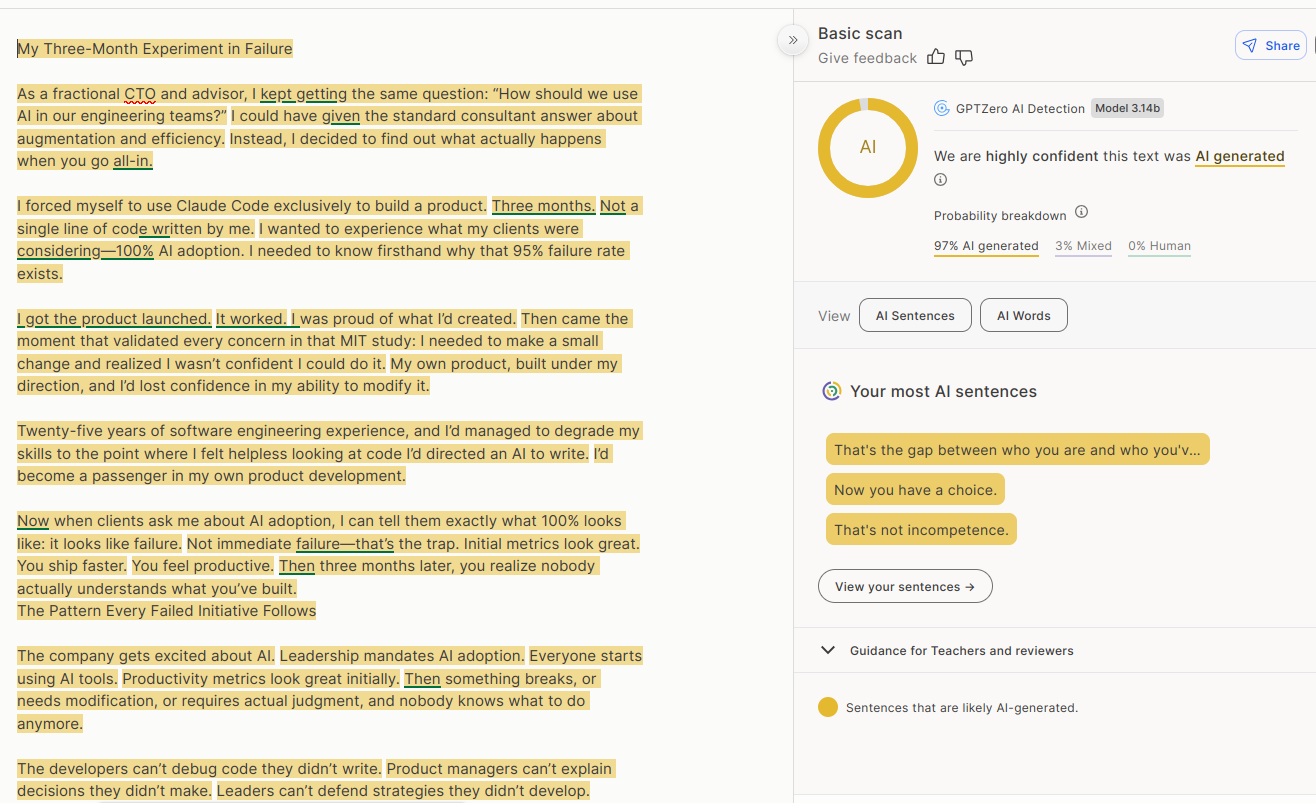

FYI this article is written with an LLM.

Don’t believe a story just because it confirms your view!

I’ve heard that these tools aren’t 100% accurate, but your last point is valid.

To quote your quote:

I got the product launched. It worked. I was proud of what I’d created. Then came the moment that validated every concern in that MIT study: I needed to make a small change and realized I wasn’t confident I could do it. My own product, built under my direction, and I’d lost confidence in my ability to modify it.

I think the author just independently rediscovered “middle management”. Indeed, when you delegate the gruntwork under your responsibility, those same people are who you go to when addressing bugs and new requirements. It’s not on you to effect repairs: it’s on your team. I am Jack’s complete lack of surprise. The idea of relying on AI to do nuanced work like this and arrive at the exact correct answer to the problem is naive at best. I’d be sweating too.

“fractional CTO” (no clue what that means, don’t ask me)

For those who were also interested to find out: Consultant and advisor in a part-time role, paid to make decisions that would usually fall under the scope of a CTO, but for smaller companies that can’t afford a full-time experienced CTO.

That sounds awful. You get someone who doesn’t really know the company or product, they take a bunch of decisions that fundamentally affect how you work, and then they’re gone.

… actually, that sounds exactly like any other company.

Personally I tried using LLMs for reading error logs and summarizing what’s going on. I can say that even with somewhat complex errors, they were almost always right and very helpful. So basically the general consensus of using them as assistants within a narrow scope.

Though it should also be noted that I only did this at work. While it seems to work well, I think I’d still limit such use in personal projects, since I want to keep learning more, and private projects are generally much more enjoyable to work on.

Another interesting use case I can highlight is using a chatbot as documentation when the actual documentation is horrible. However, this only works within the same ecosystem, so for instance Copilot with MS software. Microsoft definitely trained Copilot on its own stuff and it’s often considerably more helpful than the docs.

Fractional CTO: Some small companies benefit from the senior experience of these kinds of executives but don’t have the money or the need to hire one full time. A fraction of the time they are C suite for various companies.

Sooo… he works multiple part-time jobs?

Weird how a forced technique of the ultra-poor is showing up here.

It’s more like the MSP IT style of business. There are clients that consult you for your experience or that you spend a contracted amount of time with and then you bill them for your time as a service. You aren’t an employee of theirs.

AI is really great for small apps. I’ve saved so many hours over weekends that would otherwise be spent coding a small thing I need a few times whereas now I can get an AI to spit it out for me.

But anything big and it’s fucking stupid, it cannot track large projects at all.

What kind of small things have you vibed out that you needed?

Not OP but I made a little menu thing for launching VMs and a script for grabbing trailers for downloaded movies that reads the name of the folder, finds the trailer and uses yt-dlp to grab it, puts it in the folder and renames it.
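A rough idea of what the trailer part of such a script might look like, as a Python sketch. This is a guess at the shape, not the commenter's script: the function name `trailer_command`, the "official trailer" search phrase and the `-trailer` file suffix are all assumptions. It only builds the `yt-dlp` command and leaves running it to the caller.

```python
from pathlib import Path


def trailer_command(movie_dir):
    """Build a yt-dlp command that saves a trailer into movie_dir.

    Assumes the folder is named after the movie, e.g. "Alien (1979)".
    """
    folder = Path(movie_dir)
    title = folder.name
    # "ytsearch1:" asks yt-dlp for the first YouTube search result.
    query = f"ytsearch1:{title} official trailer"
    # Fixed base name "<title>-trailer"; yt-dlp fills in the extension.
    output_template = str(folder / f"{title}-trailer.%(ext)s")
    return ["yt-dlp", query, "-o", output_template]
```

The returned list can be handed to `subprocess.run(cmd, check=True)`. A folder name containing `%` would need escaping, since `-o` treats it as template syntax.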

I’m curious about that too since you can “create” most small applications with a few lines of Bash, pipes, and all the available tools on Linux.

Maybe they don’t run Linux. 🤭

Encryption, login systems and pricing algorithms. Just the small annoying things /s

FWIW that’s a good question but IMHO the better question is:

What kind of small things have you vibed out that you needed that didn’t actually exist or at least you couldn’t find after a 5-minute search on open source forges like Codeberg, GitLab, GitHub, etc?

Because making something quick that kind of works is nice… but why even do so in the first place if it’s already out there, maybe maintained but at least tested?

So if it can be vibe coded, it’s pretty much certainly already a “thing”, but with some awkwardness.

Maybe what you need is a combination of two utilities, maybe the interface is very awkward for your use case, maybe you have to make a tiny compromise because it doesn’t quite match.

Maybe you want a little utility to do stuff with media. Now you could navigate your way through ffmpeg and mkvextract, which together handle what you want, with some scripting to keep you from having to remember the specific way to do things in the myriad of stuff those utilities do. An LLM could probably knock that script out for you quickly without having to delve too deeply into the documentation for the projects.

Since you put such emphasis on “better”: I’d still like to have an answer to the one I posed.

Yours would be a reasonable follow-up question if we noticed that their vibed projects are utilities already available in the ecosystem. 👍

AI is hot garbage and anyone using it is a skillless hack. This will never not be true.

Wait so I should just be manually folding all these proteins?

Do you not know the difference between an automated process and machine learning?

The thing with being cocky is, if you are wrong it makes you look like an even bigger asshole

https://en.wikipedia.org/wiki/AlphaFold

The program uses a form of attention network, a deep learning technique that focuses on having the AI identify parts of a larger problem, then piece it together to obtain the overall solution.

Cool, now do an environmental impact on it.

Cool, now do an environmental impact on the data centre hosting your instance while you pollute by mindlessly talking shit on the Internet.

I’ll take AI unfolding proteins over you posting any day.

Hilarious. You’re comparing a Lemmy instance to AI data centers. There’s the proof I needed that you have no fucking clue what you’re talking about.

“bUt mUh fOLdeD pRoTEinS,” said the AI minion.

Just ask the ai to make the change?

AI isn’t good at changing code, or really even understanding it… It’s good at writing it, ideally 50-250 lines at a time

Something any (real, trained, educated) developer who has even touched AI in their career could have told you. Without a 3-month study.

What’s funny is this guy has 25 years of experience as a software developer. But three months was all it took to make it worthless. He also said it was harder than if he’d just written the code himself. Claude would make a mistake, he would correct it. Claude would make the same mistake again, having learned nothing, and he’d fix it again. Constant firefighting, he called it.

As someone who has been shoved in the direction of using AI for coding by my superiors, that’s been my experience as well. It’s fine at cranking out stackoverflow-level code regurgitation and mostly connecting things in a sane way if the concept is simple enough. The real breakthrough would be if the corrections you make would persist longer than a turn or two. As soon as your “fix-it prompt” is out of the context window, you’re effectively back to square one. If you’re expecting it to “learn” you’re gonna have a bad time. If you’re not constantly double checking its output, you’re gonna have a bad time.
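A toy illustration of that "out of the context window" effect, in Python. Real tools budget by tokens rather than by message count and may summarise older turns, so treat the fixed four-message window and the names `visible_context` and `conversation` as simplifying assumptions.

```python
from collections import deque


def visible_context(messages, window_size):
    """Return the last `window_size` messages, oldest first."""
    return list(deque(messages, maxlen=window_size))


# The correction is the very first thing said in the session.
conversation = ["fix: never call the legacy API"]
conversation += [f"turn {n}" for n in range(1, 6)]

# Two turns in, the correction is still visible to the model.
early = visible_context(conversation[:3], window_size=4)

# Five turns in, it has been pushed out of the window.
later = visible_context(conversation, window_size=4)
```

Once the correction is no longer in `later`, nothing in the input reminds the model of it, which is why the same mistake can come back.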

@felbane @AutistoMephisto I don’t have a CS degree (and am more than willing to accept the conclusions of this piece), but how is it not viable to audit code as it’s produced, so that it’s both vetted and understood in sequence?

It’s still useful to have an actual “study” (I’d rather call it a POC) with hard data you can point to, rather than just “trust me bro”.

Not immediate failure—that’s the trap. Initial metrics look great. You ship faster. You feel productive.

And all they’ll hear is “not failure, metrics great, ship faster, productive” and go against your advice because who cares about three months later, that’s next quarter, line must go up now. I also found this bit funny:

I forced myself to use Claude Code exclusively to build a product. Three months. Not a single line of code written by me… I was proud of what I’d created.

Well you didn’t create it, you said so yourself, not sure why you’d be proud, it’s almost like the conclusion should’ve been blindingly obvious right there.

The top comment on the article points that out.

It’s an example of a far older phenomenon: Once you automate something, the corresponding skill set and experience atrophy. It’s a problem that predates LLMs by quite a bit. If the only experience gained is with the automated system, the skills are never acquired. I’ll have to find it but there’s a story about a modern fighter jet pilot not being able to handle a WWII era Lancaster bomber. They don’t know how to do the stuff that modern warplanes do automatically.

Great article, brave and correct. Good luck getting through to the same leaders who blindly believe in a magical trend for this or next quarter’s numbers; they don’t care about things a year away, let alone 10.

I work in HR and was struck by the parallel between management jobs being gutted by major corps starting in the 80s and 90s during “downsizing”, who either never replaced them or offshored them. They had the Big 4 telling them it was the future of business. Know who is now providing consultation to them on why they have poor ops, processes, high turnover, etc? Take $ on the way in, and the way out. AI is just the next in a long line of smart people pretending they know your business while you abdicate knowing your business or employees.

Hope leaders can be a bit braver and wiser this go 'round so we don’t get to a cliff’s edge in software.

Tbh I think the true leaders are high on coke.