The paper is more rigorous with language but can be a slog.

ANIMA! Moving Mountains is my favorite.

Anthropic has some similar findings, and they propose an architectural change (activation capping) that apparently helps keep the Assistant character away from dark traits (sometimes). But it hasn’t been implemented in any models, I assume because of the cost of scaling it up.

Imagination

with the AI thing

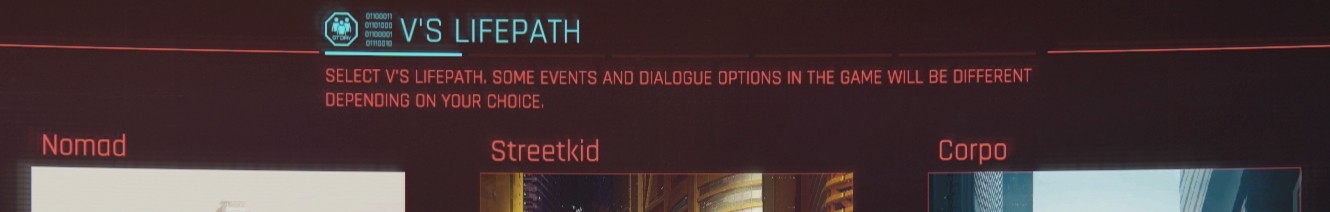

I feel like I’m stuck at this screen.

Yeah, the rate of posts here is such that I can check in once or twice a day and see pretty much everything. So if spending less time on social media is healthier…

Between 2020 and 2022, the US government found that over 300 US companies in multiple industries, including several Fortune 500 companies, had unknowingly employed these workers, indicating the magnitude of this threat.

So AI’s just making some things easier now.

North Korean remote IT workers require assistance from a witting facilitator to help find jobs, pass the employment verification process, and once hired, successfully work remotely. - Microsoft

I was gonna say… how do they deal with the HR paperwork and getting paid? They need Americans or at least people from other countries to help.

You got a genuine laugh outta me. Well put.

They keep trying to, but Hegseth formally designated them a supply chain risk just a few hours ago, right after using Claude in Iran.

and yet still preferable to OpenAI, Google, and xAI.

It’s been a long road, gettin’ from there to here.

The past and future. The present is hit and miss.

The bar is so low. Anthropic is only trying to raise it ever so slightly.

e: Also, the US military reportedly used Claude in Iran strikes despite Trump’s ban.

Aye, Anthropic is head and shoulders above everyone else on guidance, largely because they focus entirely on text/code. They’re not simultaneously developing image, video, and audio generators. Even Claude’s voice is just an 11Labs model. Plus I get the impression they’re just smarter about what they choose to research and how they use that info to improve the model.

I did find an update on that funding, btw. Anthropic already took money from Qatar (the QIA), but the amount isn’t known - likely around $100M. The UAE has yet to happen, but if it does, it would be “hundreds of millions”.

I mean, I’m not gonna defend him. But fucking up a Discord that you’re a mod of isn’t really in the same ballpark as taking money from dictators or directing fully autonomous strikes. Also, from the read, it really sounds like that Deputy CISO was a prime example of cyber-psychosis, or AI mania, or whatever we’ve decided to call it. And I assume he is part of the same vulnerable minority?

Oh, that guy! To be fair, that’s one employee, not Anthropic’s actions or position. You mentioned forcing their software on minorities while insisting it was better than it was, and I was getting OLPC flashbacks. But Anthropic looking for funding in the UAE and Qatar is shitty. I can’t seem to find anything about whether or not they went through with those contracts.

They insisted Claude was human?

As a video producer, the AI baked into the Adobe suite is very useful (generative fill, harmonize, and neural filters in Photoshop, generative extend and AI noise reduction in Premiere, lots of older stuff in After Effects).

As far as LLMs go, I get a lot out of talking through things with Claude, or coding silly little toys that only matter to me. But I’d never trust an agent with tools or access. And Anthropic’s own research is a good place to start for why that won’t change anytime soon.